Security, as one of the top priorities, cannot rely on merely a single service.

The main idea and reason behind using any kind of firewalls is that, as soon as the project reaches certain level, it begins to attract more and more audience, and that includes attackers whose purpose may be to cause harm by finding various kinds of vulnerabilities, includingdatabase vulnerabilities, cross-site scripting, HTTP flood and many others. Unfortunately, the list is almost endless.

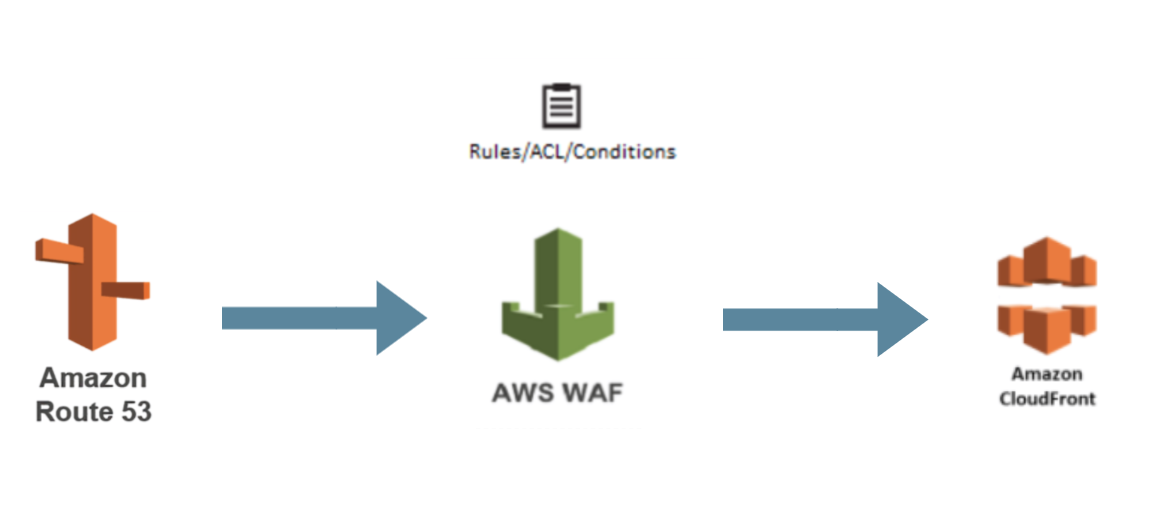

Amazon Web Services has a number of products that are capable of counteringthese kinds of threats, AWS Network Firewall and AWS Web Application Firewall to name but two. The main difference between them, amongmany others, lies in the number of OSI layers, 3-4 and 7, respectively. AWS WAF analyzes communications between external users and web application by blocking malicious requests before they reachusers or web application, and can be associated with resources such as Application Load Balancer, API Gateway, AWS AppSync and CloudFront distributions.

AWS WAF contains various kinds of rules (managed rule groups, own rules and rule groups) and actions that can be potentially applied (allow, block, count). In our project, we decided to use AWS Managed Rules, such as AWSManagedRulesSQLiRuleSet, AWSManagedRulesCommonRuleSet, AWSManagedRulesAmazonIpReputationList, AWSManagedRulesKnownBadInputsRuleSet, as well as our own rules for rate limits. Additionally, AWS Managed Rules include many other subrules, i.e. AWSManagedRulesCommonRuleSet also contain rules against cross-site scripting, size restrictions, bad bots etc.

Undoubtedly and as a matter of good practice, it's better to start writing any used infrastructure as code in the first place.

Example of Terraform code:

Typical logs flow

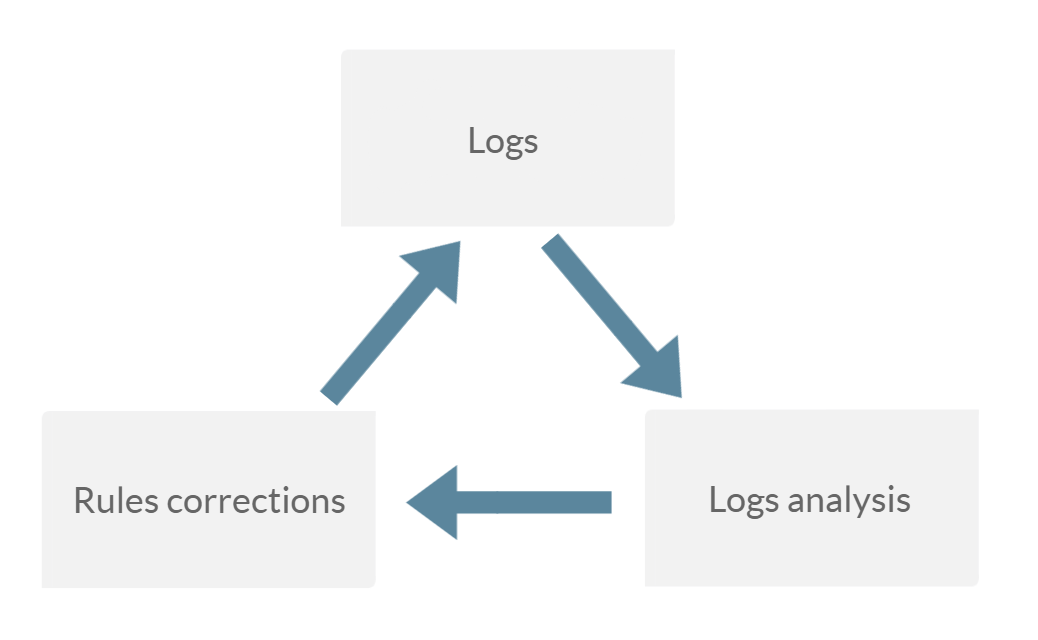

It should also be noted that the use of AWS WAF in real conditions on large projects is a rather time-consuming iterative process, and usually, in this case the blame falls on false positives, can’t be implemented ‘out of the box’. The most common practice is implementation according to the following scheme - collect logs in acount mode, analyze them and correct the AWS WAF rules based on that analysis. The collection of logs is carried out over a certain periodof time which depends on many factors, including traffic.

After the logs get into AWS S3, one of the options for a quite effective analysis is using AWS Athena. This service allows you to create atable from data in a bucket and use SQL queries against it.

Example of logs received from AWS WAF:

AWS Athena table creation (from AWS documentation):

Sample SQL query for analysis

After such analysis, we can understand which sub rules gave the largestnumber of false positives, then correct them and repeat the processof logs collection and analysis. After several iterations, as soon aswe are able to get rid of the overwhelming number of false positives,we can start the implementation in block mode while intensively monitoring the logs, so that in the event of any unforeseensituations, we can have a quick rollback.

In conclusion, it should be noted that security in the current environment should, generally, be one of the top priorities, and cannot be based on one service only. Rather, it should be a mix of services and best security practices, as this allows you to avoid negative consequences for the entire project as a whole.

We'd love to answer your questions and help you thrive in the cloud.